
MPC’s Logan Brown Describes How VFX Production Assets Were Leveraged for the Development of “Alien: Covenant in Utero,” a Virtual Reality Experience

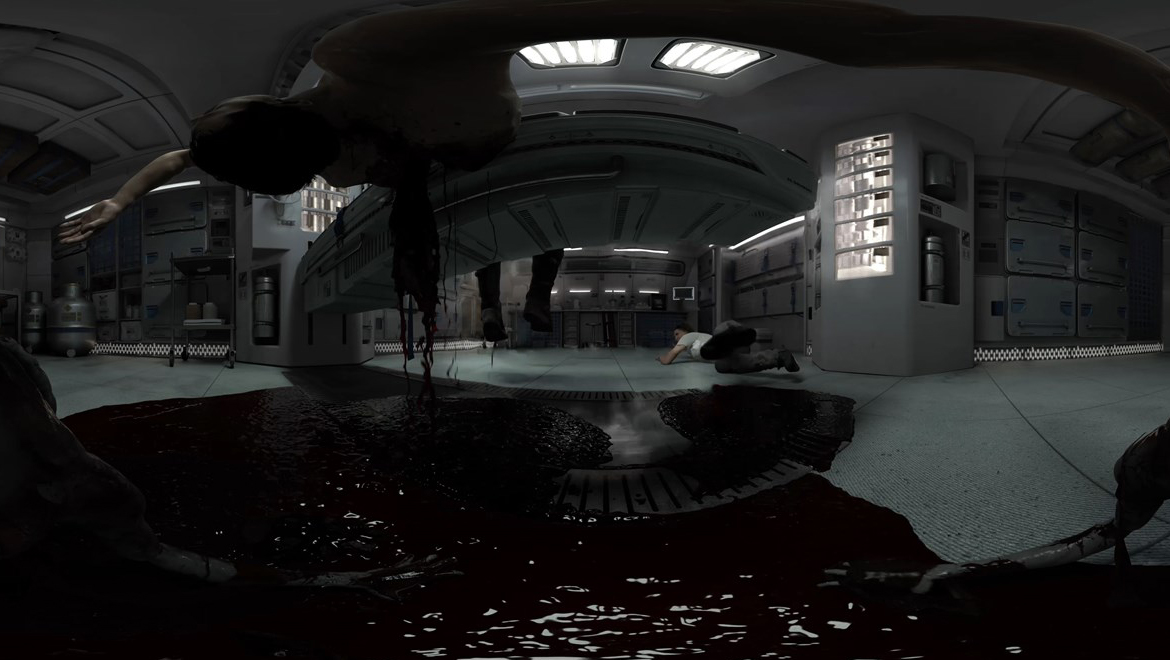

- Twentieth Century Fox, FoxNext VR Studio, RSA VR, MPC VR, a Technicolor company, Mach1, and technology partners AMD RADEON and Dell Inspiron today announced the release of ALIEN: COVENANT In Utero, a virtual reality experience.

- MPC was tasked with developing a 360-degree virtual reality experience that was true to the nearly 40-year narrative of the ALIEN franchise while preparing fans for “ALIEN: COVENANT” ahead of the film’s theatrical release. Because of the global appeal of the ALIEN franchise, it was important to make the experience available on both tethered and mobile platforms in a wide variety of languages.

- The project presented some unique challenges because it was undertaken in parallel with production of the movie, which required close collaboration with the production team to ensure the VR experience was visually consistent with the movie.

- The Technicolor Experience Center was tapped to standardize and support the different techniques for encoding 360-degree immersive video, which required production of multiple versions of the immersive experience.

- The project has been a collaboration between FoxNext VR Studio, RSA VR, MPC VR, a Technicolor company, and technology partners AMD RADEON and Dell Inspiron.

MPC Talent Accessed Resources at the Technicolor Experience Center to Bring 360-Degree Video to Market Ahead of Theatrical Release of “ALIEN: COVENANT”

Logan Brown, US Head of VR and Immersive Content at MPC Film and Executive Producer at the Technicolor Experience Center, describes the challenges of a novel project: creating a 360-degree virtual reality video based on the latest installment of the Alien movie franchise ahead of the film’s theatrical release.

Throughout the development of this virtual reality experience, MPC has used both AMD RYZEN and RADEON technologies running in DELL Inspiron systems.

Logan, you have been involved in a very interesting project, “Alien: Covenant in Utero,” an immersive 360-degree video to be released in advance of the latest installment of a revered franchise that is coming into its fourth decade. From your VR and AR perspective, tell me what audiences can expect ahead of the launch of the next installment of this film saga.

Brown: We were tasked with creating a 360-degree video for ALIEN: COVENANT. We needed to create an immersive experience that would connect with the film. The goal was to take the audience into the world of the film and the idea was to use actual film assets to create as much continuity as possible between the film and the 360-degree video.

We have recreated a scene from the movie to provide the audience with a never-before-seen perspective that is only possible in the world of immersive media and VR experiences.

That must be a big responsibility given the ongoing long-term relationship audiences have with this series of movies. What were the key creative challenges you had to face in addressing some of the concerns and issues that you have just described?

Brown: Because we were using actual film assets, we had to work hand in hand with the film production to make sure that the builds, the assets, the animation, and even the look and the lighting were consistent between both projects.

Therefore, it was important for us to be organized and maintain a tight connection with the film production so that we could complete the build in time to release it before the film.

That was one of the key creative challenges and one of our biggest concerns as we attempted to stay true to the look and feel of the Alien world that everyone is so familiar with.

This immersive experience will come out before the movie. That must have created some interesting challenges in terms of meeting deadlines. Can you describe how that decision was made and what it meant for leveraging assets from the film production in your immersive experience?

Brown: The good thing about this form of VR is that it uses prerendered video, so we were able to use the same physical assets as the movie. But when you are making creative decisions in the production process, you sometimes have to think about something from a perspective that the film does not show.

There were a couple of new ways of looking at the world that had to be considered, and it was important that the film’s director, Ridley Scott, be involved to vet some of the ideas and concepts we were playing with for the immersive media piece, so that the overall vision remained consistent.

How did you leverage those assets? Were you shooting in tandem? What were some of the technical and operational issues you had to contend with to get this together, since the production happened in parallel?

Brown: Yes, it did happen in parallel. When you are dealing with a 360-degree experience there are going to be things that the audience misses, different elements that would not necessarily be noticed, and you also have to consider the fact that the audience can look wherever they want, so it is really important to address all areas where the viewer is capable of looking.

That obviously puts more strain on production. The scope of responsibility for the artist is much greater in these circumstances. It was important that we were able to view the work in progress on a VR headset so we could replicate the user experience and make sure we were addressing all the areas that people would see.

Also, it is important that we train the viewer’s eye and guide them through the experience, because sometimes things happen behind them.

The question for us, which does not have to be addressed in a traditional 2D movie, is how to move the audience’s perspective and guide them towards the elements of the narrative that need to be told. A lot of times we address this with spatialized sound or by drawing the eye towards visual elements.

Those were some of the areas we really needed to take into consideration for this particular medium.

MPC was very involved in the visual special effects for this next film installment. How important was it to have VFX integrated into the production of the virtual reality experience?

Brown: It was fantastic from the point of view that we had access to the same artists who worked on the visual special effects for the film. There is no better way in my mind to provide visual and artistic continuity than to use the same artists.

That is one area where MPC wanted to be opportunistic and bring as much of the film’s tone and look as possible to the 360-degree piece. And because it was coming through our VFX pipeline, it was much easier for us to replicate the look and feel of the film’s lighting in the 360-degree video.

Putting your Technicolor Experience Center hat on, what role did the Center play in bringing together the different partners?

Brown: The Technicolor Experience Center strives to be on the cutting edge of VR technology. It is a place where we try to bring together software, hardware and creative partners and explore the areas that need more technical development in VR.

For this project, in particular, the Center provided great partnerships with Vicon and AMD. AMD provided hardware for the encoding and compression part of the process for the 360-degree video, which happens at the Technicolor Experience Center.

Encoding and compression is a really interesting area that still lacks standardization and development for 360-degree video. We are very keen to explore this because it has such an impact on the end user experience and we feel that standards and practices will have to be developed further in order to provide consistent high quality results across the broad range of devices that are now available to consumers. That is an area where we believe Technicolor can take the lead and provide some community support.

Additionally, we were lucky to work with Vicon for the motion capture component of the project. The result added a lot of detail and authenticity to the character movements, and we were able to place the tracked image of the motion-captured actor inside Lidar scans of the actual movie sets in order to preview our final scenes on set. Being able to block the scene accurately was really helpful for anticipating postproduction challenges ahead of time and maintaining our very tight delivery schedule.

You mentioned the broad range of platforms that are out there. When you combine those with the fact that Alien is a global phenomenon, anticipated with great excitement across the world, what steps did you take to make this as broadly available as possible in a medium that many audiences are only beginning to understand?

Brown: Localization and platform delivery present quite a challenge, just because of the broad range of devices and platforms available. That means a lot of preplanning and organization. It is critical to have a close relationship with the publishing studio, and it is extremely exciting that this will be released on such a broad scale. A lot of work has been put in to deliver this across such a broad range of platforms.

We are making it available in 12 languages and on multiple platforms for tethered and mobile headsets. It looks like we could be delivering this experience for up to eight different platforms.

Given the complexity of the endpoints, the challenges of meeting a tight deadline, and doing the VR experience while the movie itself is still in production, what were some of the lessons you learned that you could share with folks who, like you, are pioneering this new medium?

Brown: One area that is important to consider, especially when you are using a 100 percent computer graphics workflow for a 360-degree video, is to update your render forecasts constantly so you understand how much render time you will need before delivery.

360-degree video and 4K-by-4K stereo renders can use up a great deal of the render farm’s capacity very quickly. It is important to take that into account and update the forecasts as often as possible.
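The forecasting Brown recommends is mostly arithmetic: frames still to render, times two eyes for stereo, times the measured cost of each eye-frame, divided by the farm capacity actually available. The following Python sketch illustrates that calculation under stated assumptions. It is not MPC's tooling; the class name, its fields, and every number in the usage are hypothetical placeholders chosen only for illustration.

```python
from dataclasses import dataclass


@dataclass
class RenderForecast:
    """Hypothetical render-farm forecast for a stereo 360-degree sequence.

    All field names and units are illustrative assumptions, not a real
    studio pipeline API.
    """
    frames_remaining: int            # frames still to render across all shots
    core_hours_per_eye_frame: float  # latest measured average cost of one eye of one frame
    farm_cores: int                  # cores allocated to this project
    utilization: float               # fraction of farm time realistically available (0 < u <= 1)
    eyes: int = 2                    # stereo: each frame is rendered once per eye

    def __post_init__(self) -> None:
        if not 0 < self.utilization <= 1:
            raise ValueError("utilization must be in (0, 1]")
        if self.farm_cores <= 0:
            raise ValueError("farm_cores must be positive")

    def core_hours_needed(self) -> float:
        # Total work: frames x eyes x cost per eye-frame
        return self.frames_remaining * self.eyes * self.core_hours_per_eye_frame

    def wall_clock_hours(self) -> float:
        # Total work spread over the cores the project can effectively use
        return self.core_hours_needed() / (self.farm_cores * self.utilization)

    def fits_before(self, hours_until_delivery: float) -> bool:
        # True if the remaining renders finish within the delivery window
        return self.wall_clock_hours() <= hours_until_delivery


if __name__ == "__main__":
    # Illustrative numbers only.
    early = RenderForecast(frames_remaining=3000, core_hours_per_eye_frame=20.0,
                           farm_cores=2000, utilization=0.75)
    print(early.wall_clock_hours(), early.fits_before(96))  # 80.0 True

    # Same shots after a (hypothetical) lighting change raises per-frame cost.
    updated = RenderForecast(3000, 26.0, 2000, 0.75)
    print(updated.wall_clock_hours(), updated.fits_before(96))  # 104.0 False
```

Re-running the forecast whenever the measured per-frame cost changes is the point of the exercise: in this hypothetical case, a rise from 20 to 26 core-hours per eye-frame moves the estimate from 80 to 104 wall-clock hours, past a 96-hour window that looked comfortable a moment earlier.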

I mentioned before the importance of reviewing the work in progress in an actual VR headset. Obviously that’s crucial to understanding what the user experience is going to be. Also, the scope of the artists’ responsibility is much greater, given the larger canvas. It is important to take that into account when you are building your schedule.

Always of paramount importance for anyone creating a 360-degree video is to think about users possibly becoming disoriented or sick as a result of any camera movements you might put in.

So it is always important to view that in the headset and weigh it against a number of different user experiences to make sure it is going to be comfortable for as many people as possible. That is something that you have to pay close attention to. Those are some of the main learning points we have taken away from this production.